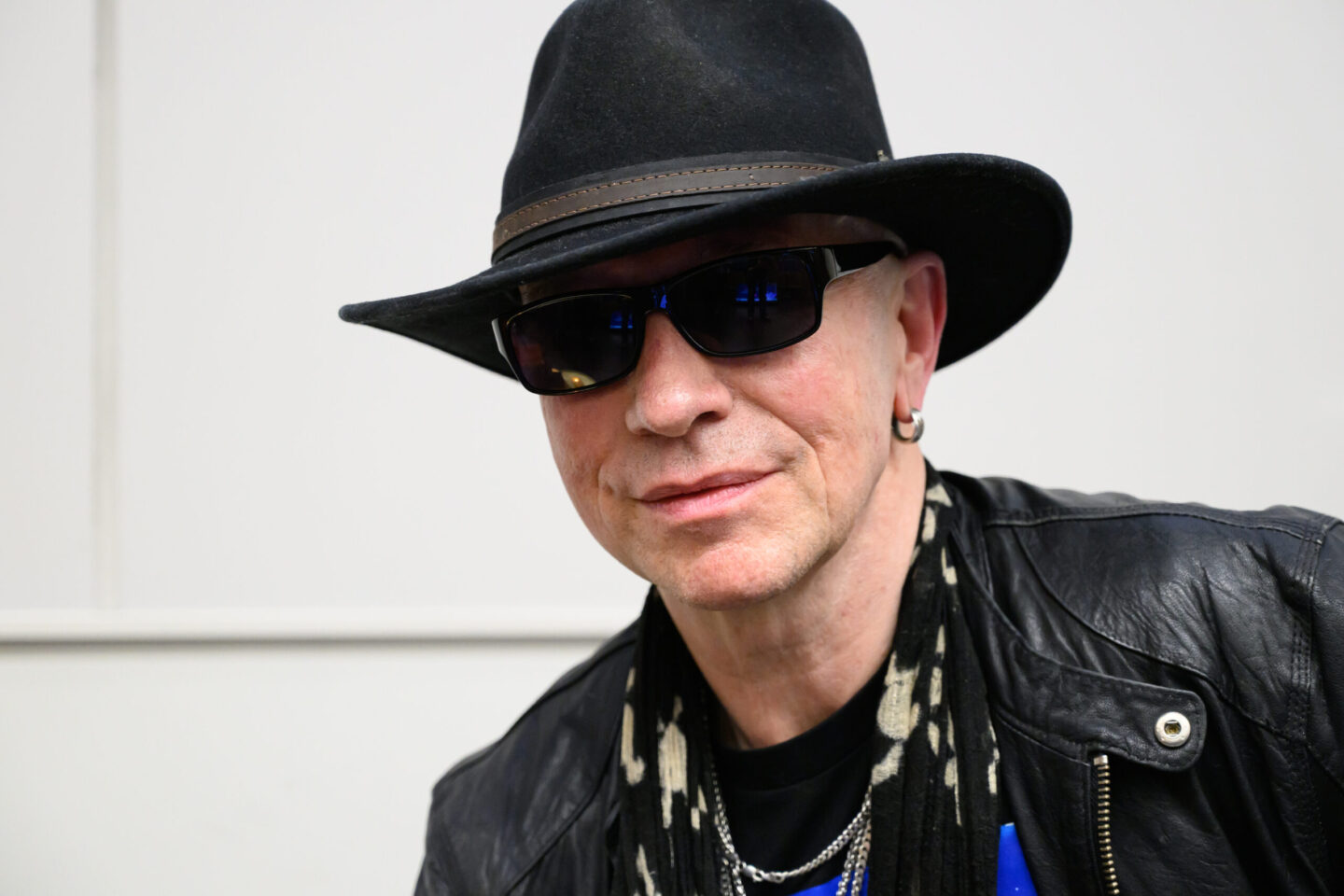

Artificial intelligence with a human mind

Artificial intelligence will only be realized if we understand how human discovery works, alumnus Olcay Yucekaya says. And perhaps that will not take another fifty years, but another fifty million. “Intelligence doesn’t just happen.”

Don’t compare yourself to anyone or anything, all self-help gurus will tell you, because that will only make you miserable. Still, we can’t help it. The neighbor’s BMW M6 contrasts sharply with the average family car. An apartment building reminds us of a rectangle, Donald Trump resembles a loudmouthed howler monkey, a rubber duck or corn on the cob. A wasp sting instills fear of the friendly bumblebee. A handful of salt can ruin your fries, while a pinch of salt makes them all the more enjoyable.

Dumb monkeys

By drawing comparisons, we learn how to make sense of the world and how to value things. According to Tilburg University alumnus Olcay Yucekaya, that’s how we become intelligent. Because how do we even know that we are intelligent? We know because we can measure our intelligence through comparison. We can compare it to the intelligence of a fellow human being, to that of a goldfish or an ape. It is only in that context that we can say that we are brilliant, or at least a lot smarter. This understanding can prove to be crucial in the development of artificial intelligence (AI). Because what does AI entail, and will it ever become so intelligent that humans will be little more than dumb monkeys in comparison to it?

That will eventually happen, Yucekaya believes. He remained fascinated by artificial intelligence after completing a Master’s in Marketing Management, and alongside his job as a data scientist at contact center 2Contact he is now looking for a way to develop true artificial intelligence. His expectations are high. “There will be a revolution for mankind. Things will happen that we cannot even dream of, things we cannot begin to understand.” Or perhaps we can, just a little bit: eternal life, space travel, time travel. The end of warfare and pollution.

Statistical models

But, Yucekaya says, we will not realize AI’s potential if we continue on the road that we are currently on. In our quest for developing artificial intelligence, we have turned to statistics and programming. AI is expected to arise from ones and zeros, from bits and bytes that find their way through computer chips.

“Neural networks? Those are not AI, but statistical models”

Currently, artificial intelligence is one of two things. Either it is preprogrammed, which means that humans have specified precisely how the intelligent technology should behave. Or it is a form of machine learning, such as so-called neural networks. In that case, for example, immense numbers of images of cats and dogs are fed into a system, which then has to learn to predict accurately which images show cats and which show dogs. This kind of artificial intelligence is not all that intelligent, and is even overhyped, according to Yucekaya. “Neural networks,” he says, “those are not actual AI, those are statistical models. We’ve been using them for years.”
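To make the "statistical model" remark concrete, here is a minimal sketch in Python. It is not Yucekaya's code and not a real image classifier: the two features (ear pointiness and snout length) and all the numbers are invented for illustration. It trains a single artificial neuron, which is mathematically the same thing as logistic regression, a long-established statistical technique.

```python
import math

# Hypothetical toy data: each animal is reduced to two made-up features,
# (ear pointiness, snout length), both scaled to the range 0..1.
# Label 1 means "cat", label 0 means "dog".
SAMPLES = [
    ((0.90, 0.20), 1), ((0.80, 0.30), 1), ((0.70, 0.10), 1), ((0.85, 0.25), 1),
    ((0.30, 0.80), 0), ((0.20, 0.90), 0), ((0.40, 0.70), 0), ((0.25, 0.75), 0),
]


def sigmoid(z):
    """Squash any number into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def predict_cat_probability(weights, bias, features):
    """Weighted sum of the features, passed through the sigmoid."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)


def train(samples, learning_rate=0.5, epochs=2000):
    """Fit the weights with plain stochastic gradient descent on the log loss."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for features, label in samples:
            # How far the current prediction is from the true label.
            error = predict_cat_probability(weights, bias, features) - label
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


weights, bias = train(SAMPLES)

# Ask the fitted model about two animals it has not seen before.
pointy_eared = predict_cat_probability(weights, bias, (0.95, 0.15))
long_snouted = predict_cat_probability(weights, bias, (0.15, 0.95))
```

After training, `pointy_eared` comes out above 0.5 and `long_snouted` below it. Nothing in the model understands what a cat is; it has only fitted numbers to examples, which is the point Yucekaya is making.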

A new machine

Wouldn’t it make more sense to look at the ways in which the most intelligent species, the human species, makes discoveries? That is, Yucekaya believes, through making comparisons. But what drives humans to do so? How did the first mathematicians come to draw perfect circles and triangles? Was it intuition, coincidence?

Yucekaya does not yet have the answer, but he believes it to be crucial. It won’t be easy. Human discovery would have to be translated into mathematical equations for actual intelligence to be realized. What doesn’t help is that almost all devices and program code are based on so-called Turing machines, a theoretical concept built on calculations that consist of ones and zeros. “I believe a new machine needs to be developed,” Yucekaya says. Thinking in terms of absolute, fixed conditions of either one or zero is not the way forward.

So what is the way forward? Yucekaya draws a square, and another square within it. He asks me to do the same, but to draw a larger square in the center. I glance at him for a second, wondering whether I’m being tricked, and then follow his instructions. The result: another square with a square in its center, only a little larger than the one in the original drawing. The point of this exercise? I never stopped to wonder just how big the square should be, or what its exact measurements in millimeters should be.

“You just started drawing. You begin with starting position A, the example. From there you move to final position B, the slightly larger square. Meanwhile you’re doing C, you’re following the path from A to B.” That path is not determined by calculations, but by processes that we don’t fully understand yet. “That’s where the answer lies,” Yucekaya says. What matters is not the destination, but the journey. “For a human being this is an easy task, but a computer would require you to define the conditions.”
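What "defining the conditions" might look like for the square exercise can be sketched in a few lines of Python. This is an illustration only, not something Yucekaya showed: the `Square` class, the function name and the `growth_factor` parameter are all hypothetical. The thing to notice is that the program cannot "just start drawing"; someone has to hand it an exact number and spell out what counts as a valid result.

```python
from dataclasses import dataclass


@dataclass
class Square:
    center_x: float
    center_y: float
    side: float  # side length, in millimeters


def draw_larger_inner_square(outer, inner, growth_factor):
    """Return a new inner square, centered in `outer`, that is
    `growth_factor` times as wide as the example `inner` square."""
    new_side = inner.side * growth_factor
    # Condition 1: "larger" has to be stated explicitly.
    if new_side <= inner.side:
        raise ValueError("growth_factor must be greater than 1")
    # Condition 2: so does "still inside the outer square".
    if new_side >= outer.side:
        raise ValueError("the inner square would no longer fit inside the outer one")
    return Square(outer.center_x, outer.center_y, new_side)


# The example drawing (position A): a 100 mm square with a 30 mm square inside.
outer = Square(0.0, 0.0, 100.0)
inner = Square(0.0, 0.0, 30.0)

# Position B: the caller must decide up front how much larger, here 1.5 times.
result = draw_larger_inner_square(outer, inner, 1.5)
```

The human in the interview picked "a little larger" without ever choosing a number. Here the value 1.5 and both checks had to be written down before anything could be drawn.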

Controlled environments

Because a machine that is able to operate without fixed conditions does not yet exist, Yucekaya is trying to develop actual intelligence based on the strict computer logic that is available. He uses software from Bethesda Softworks and the CryEngine game engine, with which video games such as Fallout, Skyrim and Kingdom Come: Deliverance were created. “I know those environments well, and to cultivate intelligence we need an environment in which we can control everything, including time. Intelligence doesn’t just happen.” After all, how long did it take before mankind became intelligent? In a game, time can be fast-forwarded by millions or even billions of years.

“AI might discover concepts like love”

Yucekaya releases NPCs (non-player characters) into these games. By programming them only to a minimal extent, he hopes that they will discover the world independently, compare what they encounter, and become intelligent. He fears that the characters’ comparisons will still be based on ones and zeros. But he is willing to try it out, because he wants to test his theory. “Perhaps something will happen that I didn’t expect.” If he succeeds, Yucekaya will have found the very foundation for actual AI.

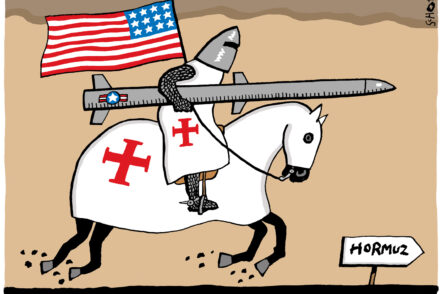

Does Yucekaya not worry that real artificial intelligence will mean the end of humanity? Humans are the smartest creatures on Earth, and our intelligence has extremely unfavorable effects on pretty much all less intelligent animals. That could be cause for concern. But Yucekaya believes that a higher intelligence will be aware of the fact that we were the ones to create it, and that it wouldn’t think to exterminate us. “Why do we think so negatively? Perhaps this intelligence will think: I have to co-exist with humans. Or it discovers concepts like love.” According to Yucekaya, the real danger is not technology, but humans using technology for destructive purposes.